AI is everywhere in modern business. From AI-powered chatbots handling customer queries to predictive analytics driving sales strategies, most organizations are adopting AI faster than you can say “algorithm.” But there’s a problem: many of these tools are being used with little to no governance in place.

According to a recent study by global law firm DLA Piper, 83% of companies are using AI tools in some form, but only 86% of them have adopted an AI code of ethics. That leaves around 14% of organizations using AI without one. In some jurisdictions the picture is more alarming: over half of companies reportedly permit the use of AI without any policy in place to govern it.

For senior governance professionals such as general counsel and corporate secretaries, C-suite leaders such as CIOs and CROs, and ultimately the board of directors, this isn’t just a potential compliance headache; it’s a risk landmine. Without oversight, AI tools can expose your organization to data breaches, algorithmic bias, and regulatory blowback from new legislation such as the EU AI Act.

So, how do you bridge the gap? One sure-fire way to kickstart your AI governance strategy is to run a safe-to-fail AI tool assessment. This structured, low-risk experiment can help you:

Here’s an overview of how to run such an exercise, step by step:

Before you plan the exercise, take stock of where AI is already being used in your business. Chances are, more tools are in play than you think.

Example: Your marketing team may be using AI to generate content, while HR is screening candidates using AI-powered tools. Many of these may have been adopted independently, with little oversight or alignment with organizational policies.

Quick Tip: Use this audit to create a centralized record of all AI applications currently in use.
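A centralized record can start as something very simple. The sketch below is one possible shape for it, not a prescribed format: the field names (`handles_personal_data`, `has_policy`), the `ungoverned` helper, and the sample entries are all hypothetical and should be adapted to your own audit.

```python
from dataclasses import dataclass


@dataclass
class AIToolRecord:
    """One row in a hypothetical AI tool inventory."""
    name: str
    department: str
    purpose: str
    owner: str = "unassigned"
    handles_personal_data: bool = False
    has_policy: bool = False


def ungoverned(records):
    """Return tools with no governing policy, personal-data tools first."""
    gaps = [r for r in records if not r.has_policy]
    # False sorts before True, so tools that handle personal data come first.
    return sorted(gaps, key=lambda r: (not r.handles_personal_data, r.name))


# Illustrative entries only; replace with the findings of your own audit.
inventory = [
    AIToolRecord("content-generator", "Marketing", "Draft campaign copy"),
    AIToolRecord("candidate-screener", "HR", "Rank job applicants",
                 handles_personal_data=True),
    AIToolRecord("support-chatbot", "Customer Service", "Answer customer queries",
                 owner="CIO office", handles_personal_data=True, has_policy=True),
]

for record in ungoverned(inventory):
    print(f"{record.department}: {record.name} "
          f"(personal data: {record.handles_personal_data})")
```

Sorting the gaps so that tools touching personal data appear first is just one way to prioritize; your organization may weigh other risk factors more heavily.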

Your safe-to-fail exercise should target one specific AI use case. Think of it as a microcosm for broader governance challenges.

Goal: Use this exercise to uncover governance gaps that may apply across other AI tools.

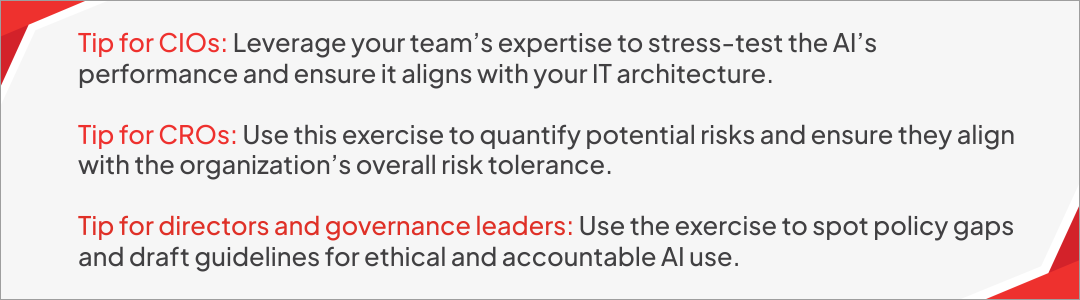

AI governance is a team sport. You’ll need the right mix of technical, legal, and operational expertise to run a meaningful experiment.

Here’s how to test the AI tool safely and effectively:

Example: If you’re testing an AI recruitment tool, analyze how it scores candidates and whether its recommendations show bias or reflect business priorities.
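One way to make the bias question in the recruitment example concrete is to compare how often the tool recommends candidates from different groups. The sketch below uses the "four-fifths" heuristic, a commonly cited screening threshold from US employment guidance, purely as an illustrative assumption; it is not a legal test, and the group labels and numbers are made up.

```python
from collections import defaultdict


def selection_rates(outcomes):
    """Compute the share of recommended candidates per group.

    outcomes: iterable of (group, was_recommended) pairs.
    """
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, recommended in outcomes:
        totals[group] += 1
        if recommended:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def impact_ratios(rates):
    """Divide each group's selection rate by the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        # Nobody was recommended, so there is no disparity to measure.
        return {group: 1.0 for group in rates}
    return {group: rate / best for group, rate in rates.items()}


# Hypothetical test data: 100 candidates per group.
outcomes = (
    [("group_a", True)] * 40 + [("group_a", False)] * 60
    + [("group_b", True)] * 25 + [("group_b", False)] * 75
)

rates = selection_rates(outcomes)
ratios = impact_ratios(rates)

# Assumed threshold: flag any group whose ratio falls below 0.8.
flagged = sorted(group for group, ratio in ratios.items() if ratio < 0.8)
print("Selection rates:", rates)
print("Groups to review:", flagged)
```

A flagged group is a prompt for the cross-functional team to investigate further, not proof of bias; small samples and legitimate job-related criteria can both move these ratios.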

The experiment isn’t just about the tool — it’s about what it reveals about your governance approach. After the test, hold a debrief to answer:

Use this step to document insights that can inform your broader AI governance framework.

With lessons from the exercise, it’s time to go from reactive to proactive. Develop a framework that addresses:

Quick Tip: Consider forming a cross-functional AI governance committee to oversee these efforts and update policies regularly.

AI governance isn’t a one-off task. Use what you’ve learned to scale oversight across all AI tools and build ongoing monitoring processes.

Example: As your AI use evolves, your governance framework should adapt to cover new risks, such as emerging privacy laws or advances in AI capabilities.

AI adoption is no longer a choice; it’s an imperative to remain competitive. But without governance, that adoption is fraught with risk. By running a safe-to-fail experiment, you can take control of your AI landscape, uncover governance gaps, and create a strategy that supports accountability, compliance, and long-term success.

Whether you’re a senior GRC or legal professional, C-suite executive, or board director, your leadership is pivotal in building AI governance that safeguards trust, innovation, and value. As you take the first steps in running a safe-to-fail AI experiment and building smarter governance, remember that you don’t have to go it alone.

Drawing on years of hands-on experience with directors and GRC professionals — and backed by Diligent Institute's market-leading research — we’ve developed a comprehensive suite of best-practice educational resources to help you execute your experiment effectively.

Available exclusively through the Diligent One Platform, our Education & Templates Library includes:

Equip your organization with the tools it needs to confidently navigate the complexities of AI governance. With the right resources and guidance, you’ll move from experimentation to oversight with ease.